Originally published on Indie Stash

This post was inspired by a publication by Vlad Magdalin of Webflow.

Unlike web designers, who can now build websites from scratch visually without a line of code, video game localizers still work in the black and white of their translation tools, without visual cues.

Hopefully, this post will inspire someone to create a WYSIWYG or visual translation app.

First, let’s take a look at how other creatives work.

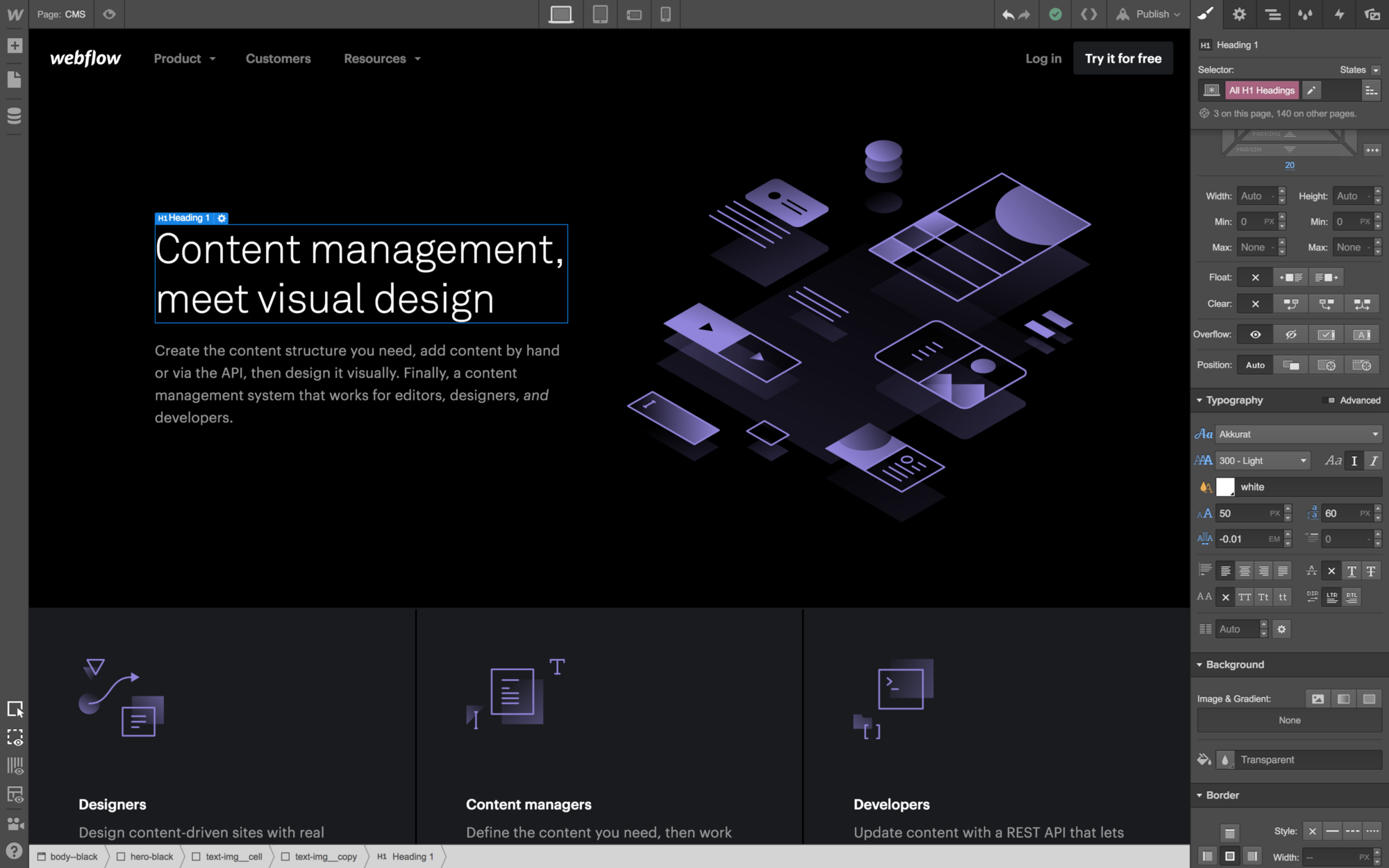

Web Designers

Can see their design in real time

Webflow

Web Developers

Can see right away the violin is white where it needs to be brown

Dreamweaver

Digital Magazine Designers

Can read a magazine at work

InDesign

Copywriters & bloggers

Markdown on the left, live preview on the right

Ghost

Modern Bloggers

Need to have a basic design sense and see their design preview

Medium Series (art: Guardian of the Bountiful Spring by Kunkka & Leo by genicecream)

Sound Designers

Can hear the audio output; they don’t have to guess how that beat feels in a song

Flux FX

Vloggers, YouTubers

Use their cameras’ front/flip screen to keep their handsomeness in check (and shoot a crisp picture for us)

Game Character Designers

Know their character’s inner thoughts and can even see them in the context of the game

3D Animators

Can make timid eye contact with their furry character

Autodesk Maya

3D Modeling Artists

Will know right away if one eye of the lizard is missing or something hasn’t been transl… created

Cheetah 3D

Architects

First build computer models, then construct buildings (because any reworking would be way too costly)

AutoCAD

And…

Video Game Localizers

Have no clue how long their translation is allowed to be, nor whether “psychic attacks” requires following plural rules, nor what “perfect size” refers to:

SDL Trados
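The context a localizer is missing here can be written down as plain data. Below is a minimal sketch, assuming a hypothetical `GameString` record that carries a character limit and a developer note, plus simplified integer plural rules for English and Russian (following the CLDR plural categories). The field names, the 24-character limit, and the sample Russian strings are all illustrative assumptions, not part of any real translation tool.

```python
from dataclasses import dataclass


@dataclass
class GameString:
    """A translatable string plus the context a localizer usually lacks.

    Hypothetical schema, for illustration only.
    """
    key: str
    source: str
    max_chars: int  # assumed UI limit for the rendered text
    note: str       # free-form context from the developer


def plural_category(lang: str, n: int) -> str:
    """Return the plural category for a non-negative integer n.

    Only English and Russian are sketched here.
    """
    if lang == "en":
        return "one" if n == 1 else "other"
    if lang == "ru":
        if n % 10 == 1 and n % 100 != 11:
            return "one"
        if 2 <= n % 10 <= 4 and not 12 <= n % 100 <= 14:
            return "few"
        return "many"
    raise ValueError(f"no plural rule sketched for {lang!r}")


def render(forms: dict[str, str], lang: str, n: int) -> str:
    """Pick the plural form that matches n and fill in the number."""
    return forms[plural_category(lang, n)].format(n=n)


def fits(entry: GameString, text: str) -> bool:
    """Check the rendered text against the assumed character limit."""
    return len(text) <= entry.max_chars


if __name__ == "__main__":
    entry = GameString(
        key="skill.psychic",
        source="{n} psychic attacks",
        max_chars=24,
        note="Skill tooltip; n is a whole number",
    )
    ru_forms = {
        "one": "{n} психическая атака",
        "few": "{n} психические атаки",
        "many": "{n} психических атак",
    }
    for n in (1, 3, 5, 21):
        text = render(ru_forms, "ru", n)
        print(text, "fits" if fits(entry, text) else "too long")
```

With a limit and a note attached to each string, a tool could at least warn about overlong text and ask for every plural form the target language needs, even before any visual preview exists.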

In the best of cases, their tool lets them see where the original string appears in the game (but offers no way to see the translation):

Source: Sony’s LAMS

Concept Visual Localization App

In the mockup below, it’s clear to the localizer that the cat-boy is talking about the hat. Now they can play with this string (the impersonal “it” can be replaced with “the hat”) and make it the sweetest line it can be, since there’s clearly plenty of room on the screen.

Looks like regular subtitling software… But it’s so much more: it’s that futuristic, hi-tech, yet-to-be-invented visual localization app

Today, it seems only back-end devs, unicorns (designers who code), and game localizers are forced to work in the spartan beauty of their text-based UIs.

Dev’s code soup vs. localizer’s tag/placeholder soup

But the real problem is the strings that are taken out of context: localizers are never fully sure the whole effort has succeeded until the entire job is compiled for a test run (and more reruns) and debugged.

Visual video game localization is not genome sequencing. The technology is there, somewhere; someone simply needs to start building.